NVDA: The supercycle everyone keeps calling dead just hit $215.9 billion

NVIDIA Corporation - Q4 FY2026 earnings deep-dive

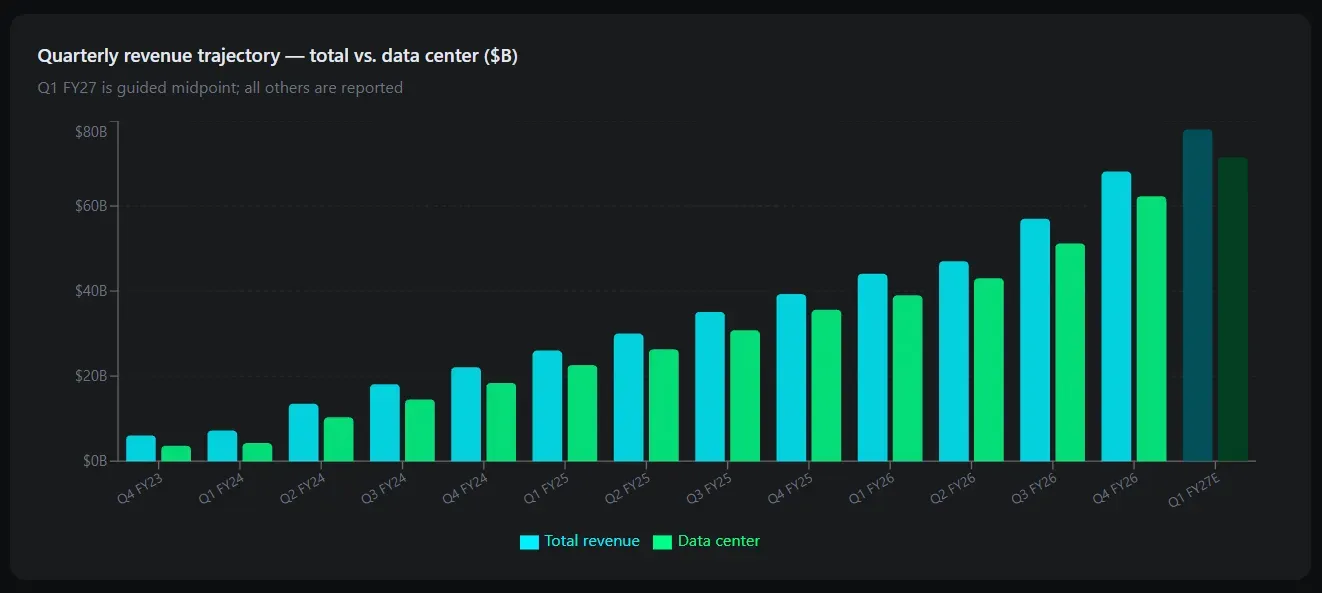

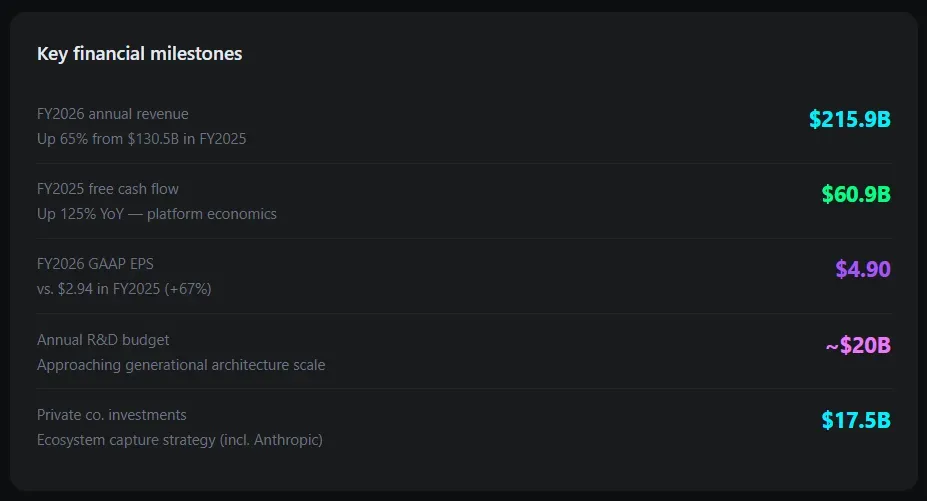

I have been listening to AI bubble warnings for two years. The bears have been wrong every quarter. NVIDIA just reported fiscal year 2026 revenue of $215.9 billion - up 65% from the prior year - and guided Q1 FY2027 to $78.0 billion, blowing past the $72.6 billion analyst consensus by more than $5 billion. The market is still trying to decide whether this is a peak. I believe it is not, and here is why.

The structural argument is simple: AI infrastructure is entering a second wave. Training scaling laws drove the first wave. Inference scaling - the idea that 'thinking longer produces smarter answers,' as Jensen Huang put it on the earnings call - opens an entirely new and potentially larger compute market. That is before agentic AI and physical AI (robotics, autonomous vehicles) are counted. I added to my position after this print.

Fundamental breakdown

Q4 FY2026 headline numbers

The data center print is extraordinary. At $62.3 billion for a single quarter - up 75% year-over-year and 22% sequentially - NVIDIA is generating more quarterly data center revenue than many Fortune 500 companies produce in an entire fiscal year.

Networking was the surprise standout at $10.98 billion, up 263% year-over-year, driven by NVLink adoption and Spectrum-X Ethernet. The gaming shortfall is a known story: memory constraints force supply allocation toward AI accelerators, and NVIDIA may skip a gaming GPU launch cycle entirely in 2026.

Full-year FY2026 scorecard

Significant firepower

The sovereign AI number deserves attention. Revenue from countries building national AI infrastructure more than tripled to over $30 billion in FY2026. Canada, France, Netherlands, Singapore, and the UK were named as primary customers. This is a demand vector that did not meaningfully exist two years ago and represents a durable, government-backed spending category that is less cyclical than private enterprise CapEx.

Revenue quality and growth durability

I care less about the beat magnitude than about what the revenue is made of. Three qualities stand out in this print.

First, customer diversification is improving. Hyperscalers - Alphabet, Amazon, Meta, Microsoft - account for just over 50% of data center revenue. That means nearly half now comes from sovereign entities, enterprises, and model builders. A year ago the hyperscaler concentration was a legitimate concern. It is a diminishing one.

Second, the upgrade cycle is structurally locked in. NVIDIA shipped the first Vera Rubin samples to customers earlier this week. Rubin promises 10x lower inference costs than Blackwell. When a new architecture promises 10x cost improvements, every data center operator on the planet has a business case to rip and replace. That creates a multi-year capex commitment cycle where NVIDIA is the essential vendor.

Third, inference demand is additive, not substitutive. The bear case assumed that cheaper inference (via DeepSeek-class efficiency models) would reduce GPU demand. The opposite appears to be occurring: as inference becomes cheaper, usage volumes expand superlinearly, absorbing and exceeding the efficiency gains. Jensen Huang described this directly on the call - inference scaling adds a second law driving perpetual compute demand.

Operating leverage and margin trajectory

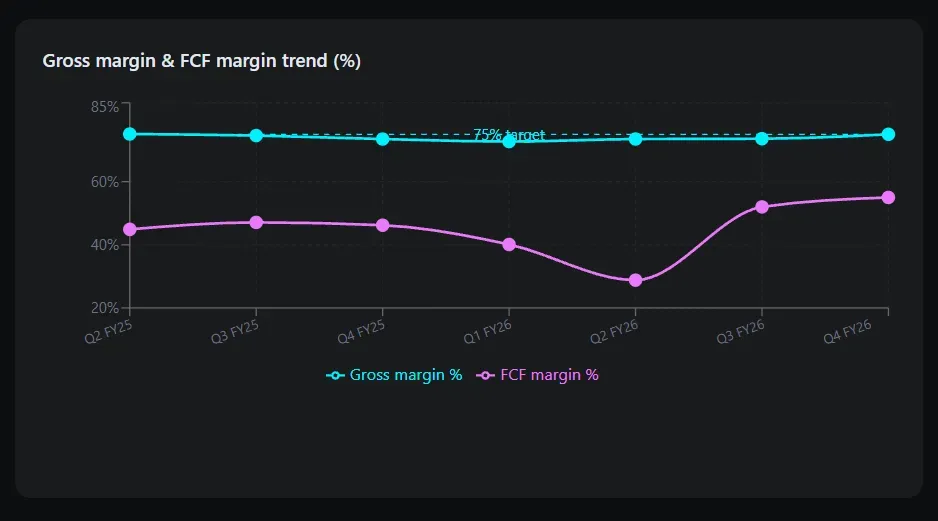

Gross margin stability at 75% non-GAAP is the single most important number in this report. NVIDIA is generating roughly $68 billion in quarterly revenue and retaining three-quarters of it as gross profit. This is not typical semiconductor economics. It is platform economics.

Sustaining mid-70s gross margins during a generation transition (Blackwell to Rubin) is the challenge Jensen addressed directly on the call. His answer: 'The single most important lever is delivering generational leaps to our customers.' So long as each architecture offers dramatically superior performance per watt, customers pay premium prices, and margins hold. This has worked for five consecutive architecture generations.

Operating leverage is visible in the numbers. R&D spend is approaching $20 billion annually - not cheap - but revenue growth is outpacing cost growth by a wide margin.

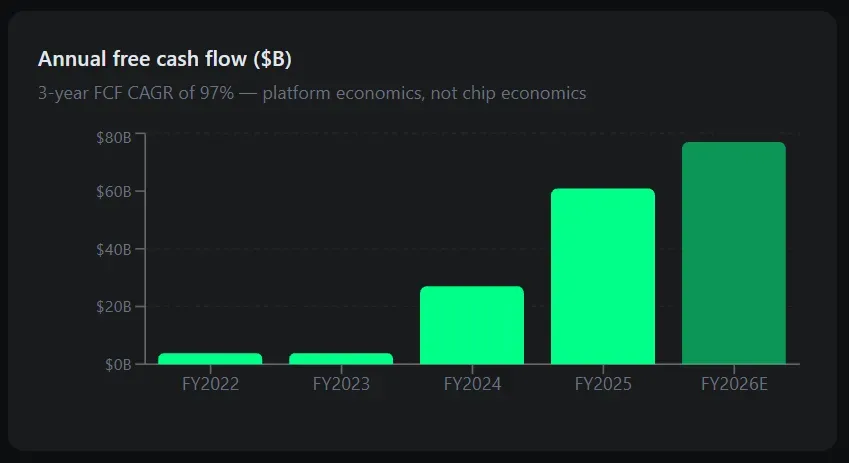

Free cash flow for FY2025 reached $60.9 billion, up 125% year-over-year, and FY2026 FCF is tracking even higher, with one estimate placing it above $65 billion. The FCF yield at current market cap is approximately 1.7%, which is compressed but not irrational given the growth rate.
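For readers who want to check the yield figure, here is a minimal Python sketch of the arithmetic. It assumes the approximate trailing FCF ($77 billion) and market cap ($4.6 trillion) quoted in the bull case later in this piece; the function name `fcf_yield` is my own illustration, not a standard library call.

```python
def fcf_yield(free_cash_flow: float, market_cap: float) -> float:
    """Return free cash flow yield as a fraction of market capitalization."""
    if market_cap <= 0:
        raise ValueError("market_cap must be positive")
    return free_cash_flow / market_cap


# Inputs in billions of dollars. Both are approximate figures quoted in this
# article, so treat the result as a rough check rather than a precise value.
TRAILING_FCF_BN = 77.0    # approximate trailing free cash flow
MARKET_CAP_BN = 4600.0    # $4.6 trillion market cap

yield_pct = fcf_yield(TRAILING_FCF_BN, MARKET_CAP_BN) * 100
print(f"FCF yield: {yield_pct:.1f}%")
```

Swapping in a different FCF estimate or market cap shows how sensitive the yield is to either input.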

One legitimate concern: NVIDIA changed its non-GAAP definition beginning Q1 FY2027 to include stock-based compensation. This increases transparency and should be applauded - but it will mechanically lower reported non-GAAP EPS by approximately 10 basis points per year. Investors should adjust models accordingly.

Competitive moat - why CUDA is not a product

The bull case on NVIDIA is often presented as a chip story. I think that is wrong. NVIDIA's moat is CUDA - a software ecosystem representing 15+ years of developer investment, optimized libraries, and workflow integrations that cannot be replicated by throwing money at the problem.

AMD offers competitive hardware on paper. Google's TPUs are purpose-built and cost-effective for specific workloads. Amazon Trainium, Microsoft Maia, Meta MTIA - the hyperscalers are all developing custom silicon. None of these have meaningfully dented NVIDIA's data center market share. The reason is not chip performance. It is that the entire AI development stack - PyTorch, TensorFlow, every major framework, every inference optimization library - is tuned first for CUDA. Switching costs are enormous.

NVIDIA is also investing $17.5 billion in private AI companies and infrastructure funds in FY2026 alone. This is not philanthropy. It is ecosystem capture. Every startup that takes NVIDIA capital builds on NVIDIA infrastructure. The $10 billion commitment to Anthropic and deepened OpenAI engagement are not financial investments - they are moat-widening exercises.

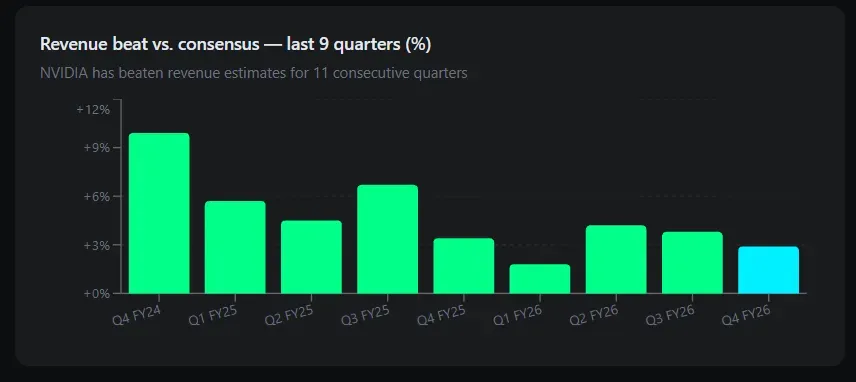

Management credibility - the guidance track record

I track management credibility through the beat-and-raise cycle. NVIDIA has now delivered 11 consecutive quarters of revenue growth exceeding 55%. More importantly, they have consistently underpromised and overdelivered on guidance - Q4 FY2026 was guided at $65 billion and came in at $68.1 billion. Q1 FY2027 was guided at $78.0 billion against a $72.6 billion consensus.
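The size of those gaps is easier to compare in percentage terms. A minimal sketch, using only the figures quoted above (the helper name `pct_above` is hypothetical):

```python
def pct_above(actual: float, reference: float) -> float:
    """Percentage by which `actual` exceeds `reference`."""
    if reference == 0:
        raise ValueError("reference must be non-zero")
    return (actual - reference) / reference * 100


# All figures in billions of dollars, as quoted in the paragraph above.
q4_beat = pct_above(68.1, 65.0)   # Q4 FY2026 reported revenue vs. prior guidance
q1_gap = pct_above(78.0, 72.6)    # Q1 FY2027 guidance vs. analyst consensus

print(f"Q4 FY2026 beat vs. guidance: {q4_beat:.1f}%")
print(f"Q1 FY2027 guide vs. consensus: {q1_gap:.1f}%")
```

Note that the two numbers measure different things: the first compares results with the company's own guidance, the second compares new guidance with what analysts expected.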

Jensen Huang has earned credibility with a specific kind of forward communication: he describes demand qualitatively in ways that prove directionally accurate. 'Blackwell sales are off the charts' last quarter preceded a $62.3 billion data center print. 'Reasoning AI adds another scaling law' this quarter is a thesis worth taking seriously given the track record.

CFO Colette Kress deserves equal credit. Her CFO commentary is unusually direct about headwinds - she named the China revenue exclusion in guidance explicitly, flagged memory constraint headwinds to gaming, and disclosed the non-GAAP methodology change proactively. This is not a management team that hides problems in footnotes.

The AI bubble question - a real risk, not a dismissal

Why the bubble concern is legitimate

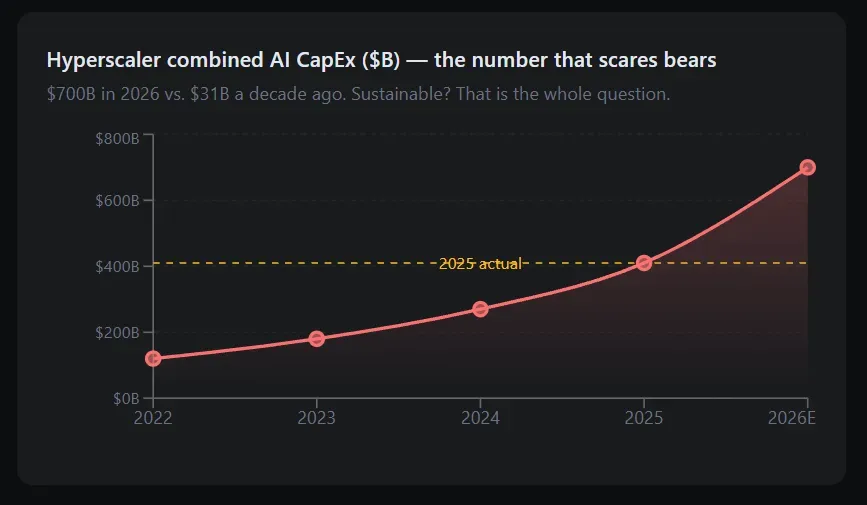

I take the AI bubble risk seriously. Bridgewater's Greg Jensen described the current phase as 'more dangerous' — exponentially rising physical infrastructure investment funded increasingly by outside capital. The top five hyperscalers are projected to invest approximately $700 billion in AI infrastructure in 2026, up from $410 billion in 2025. At some point, this capital must generate returns that justify the investment.

The uncomfortable reality: circular AI economics exist. Cloud providers buy NVIDIA GPUs, build AI services, sell capacity to model labs and startups, who then buy more GPU time. This self-reinforcing loop can persist as long as external capital flows in — but it means a meaningful portion of NVIDIA's revenue is ultimately funded by venture capital and equity markets rather than by genuine end-user demand.

| Bubble indicator | Current signal |
| --- | --- |
| Hyperscaler CapEx growth | $410B (2025) → ~$700B (2026) - steep but tied to product revenue |
| Revenue vs. capacity utilization | Hyperscalers report strong utilization; not ghost data centers yet |
| Circular capital dependency | Model labs and startups partially funded by VC - genuine risk |
| Valuation premium | NVDA PEG ratio ~0.8x - attractive relative to growth, not bubble-extreme |
| Customer concentration | Hyperscalers >50% of DC revenue - still high, improving |
| Competitive response timeline | Custom silicon 2–3 years from meaningful share displacement |

What a bubble correction actually looks like for NVDA

If AI CapEx peaks in 2026 - the bear case - the question is not whether NVIDIA falls, but by how much and for how long. I model three scenarios.

Scenario A - soft landing. CapEx growth slows from 70% to 20–25% annually as hyperscalers digest installed infrastructure. NVIDIA revenue growth decelerates to 20–30% per year. This is not a disaster. It is a normalization. The stock probably re-rates down to 30–35x forward earnings, implying a 25–35% drawdown from current levels before resuming growth. Recovery timeline: 12–18 months.

Scenario B - hard landing. A meaningful AI winter - where foundation model quality plateaus, enterprise adoption stalls, and hyperscaler utilization rates disappoint - would see CapEx cuts of 30–40%. NVIDIA revenue could decline 25–35% from peak. At 20x trough earnings, the stock could retrace to the $90–$110 range. This happened in semiconductors before (2001, 2009, 2019 trade war). Recovery timeline: 24–36 months.

Scenario C - catastrophic regulatory action. Antitrust break-up of hyperscalers, extreme export controls, or forced open-sourcing of CUDA. Low probability but existential. Not my base case.

The structural demand from sovereign AI, enterprise digital transformation, and inference scaling is real enough that a full cycle reset seems unlikely in the near term. But owning NVIDIA requires accepting that a 30–40% drawdown is a normal event in the semiconductor cycle, and that the stock has already experienced a 3-month correction from its $212 peak.
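Two pieces of arithmetic sit behind these scenarios, and a short Python sketch makes them explicit. The first is the asymmetry of drawdowns: a fall of a given percentage needs a larger percentage gain to recover. The second backs out the earnings per share implied by Scenario B's $90–$110 range at 20x. The function names are my own, and the implied EPS is simply what the stated price and multiple require, not a forecast.

```python
def gain_to_recover(drawdown: float) -> float:
    """Fractional gain needed to get back to the prior level after a drawdown."""
    if not 0 <= drawdown < 1:
        raise ValueError("drawdown must be in [0, 1)")
    return 1 / (1 - drawdown) - 1


def implied_eps(price: float, multiple: float) -> float:
    """Earnings per share implied by a share price and an earnings multiple."""
    if multiple <= 0:
        raise ValueError("multiple must be positive")
    return price / multiple


# A 30-40% drawdown needs a disproportionately larger rebound to recover.
for dd in (0.30, 0.40):
    print(f"{dd:.0%} drawdown -> {gain_to_recover(dd):.1%} gain to recover")

# Scenario B: a $90-$110 share price at 20x trough earnings.
scenario_b_eps = [implied_eps(price, 20) for price in (90, 110)]
print(f"Implied trough EPS: ${scenario_b_eps[0]:.2f} to ${scenario_b_eps[1]:.2f}")
```

The recovery math is why position sizing matters more than entry timing: a 40% drawdown needs roughly a two-thirds gain just to get back to even.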

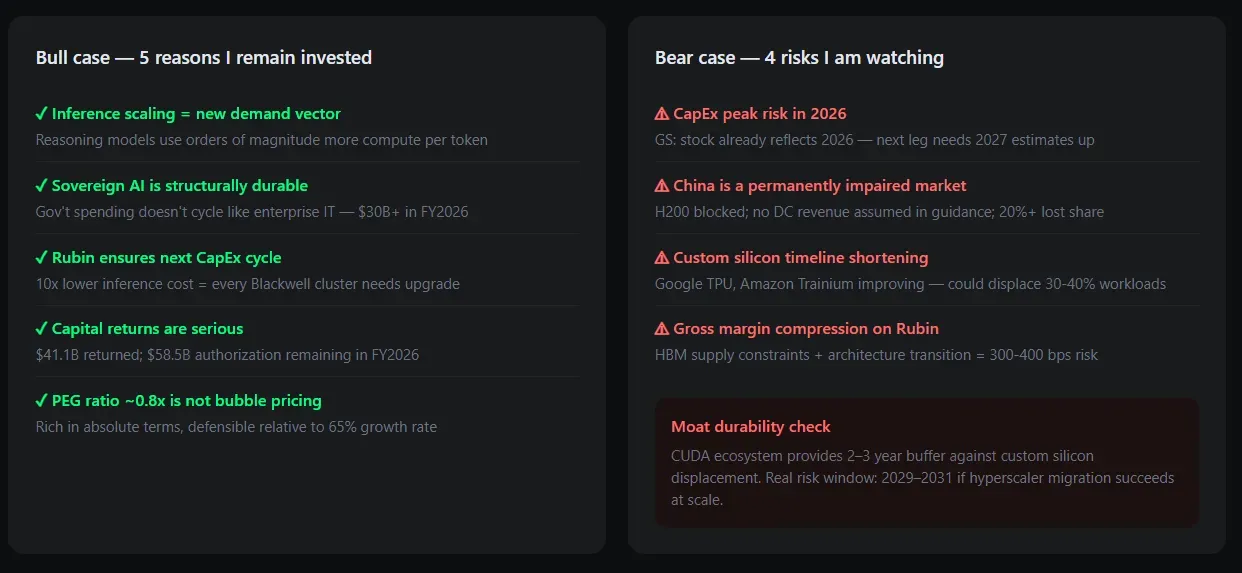

Bull case: five reasons I remain invested

- Inference scaling is a new, independent growth vector. Reasoning models (o3-class) require orders of magnitude more compute per token generated. As these models become the production standard for enterprise AI, inference CapEx adds to training CapEx rather than cannibalizing it.

- Sovereign AI is structurally durable. Governments do not cut AI infrastructure budgets the way enterprise IT departments do. The $30+ billion sovereign AI revenue in FY2026 is backed by national strategic objectives — this demand persists through private sector downturns.

- Rubin architecture ensures a two-year head start on the next cycle. Samples shipped this week; production ramp in H2 2026. At 10x lower inference cost, every existing Blackwell cluster has a business case for upgrade. This locks in the next CapEx cycle regardless of aggregate AI spending trends.

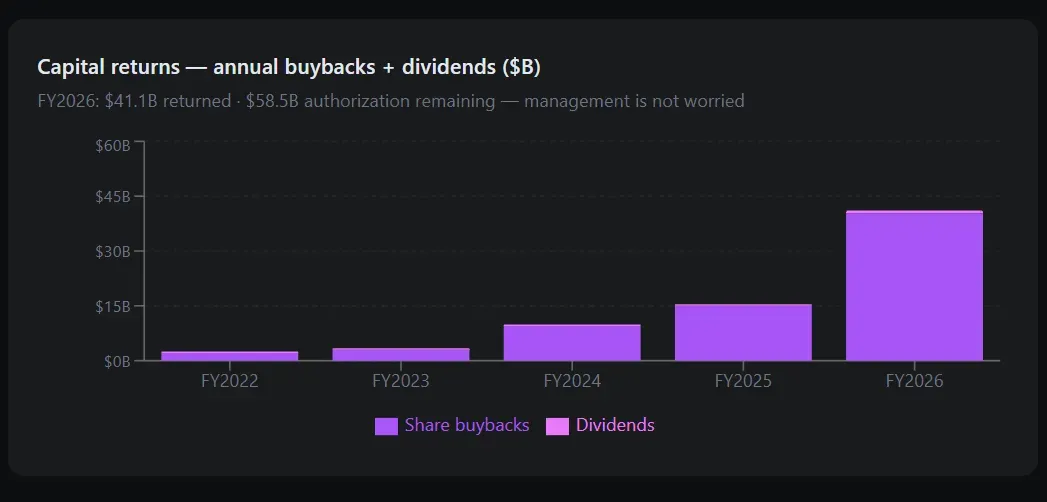

- Capital returns are serious. $41.1 billion returned in FY2026 through buybacks and dividends. $58.5 billion remaining authorization. At current FCF generation, NVIDIA can sustain aggressive buybacks while funding $20 billion in annual R&D — few companies in history have had this luxury.

- PEG ratio of 0.8x is not a bubble multiple. At $4.6 trillion market cap and FCF of approximately $77 billion trailing, the stock is not cheap in absolute terms — but relative to a 65% growth rate and a 97% three-year FCF CAGR, the valuation is defensible for a long-horizon investor.
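To see how much the PEG argument depends on the growth input, here is a rough Python sketch. It uses the common definition PEG = (P/E) ÷ (growth rate in percent). I do not specify above which earnings multiple or growth rate underlies the 0.8x figure, so pairing it with the 65% revenue growth rate is an assumption made purely for illustration, as are the function names.

```python
def implied_pe(peg: float, growth_pct: float) -> float:
    """P/E multiple implied by a PEG ratio and a growth rate in percent."""
    return peg * growth_pct


def peg_ratio(pe: float, growth_pct: float) -> float:
    """PEG ratio for a given P/E multiple and growth rate in percent."""
    if growth_pct <= 0:
        raise ValueError("growth_pct must be positive")
    return pe / growth_pct


# ASSUMPTION: the 0.8x PEG is paired with the 65% growth rate quoted in the text.
pe = implied_pe(0.8, 65.0)
print(f"Implied P/E at 0.8x PEG and 65% growth: {pe:.0f}x")

# Hold that multiple fixed and let growth decelerate toward the 20-30% range
# described in the soft-landing scenario.
for growth in (65.0, 30.0, 20.0):
    print(f"growth {growth:.0f}% -> PEG {peg_ratio(pe, growth):.2f}x")
```

Under these assumptions the same multiple that looks cheap at 65% growth looks expensive at 20–30%, which is why the valuation case and the growth-durability case cannot be separated.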

Bear case - four risks I am watching

- CapEx peak risk in 2026. If hyperscaler AI spending plateaus or reverses, NVIDIA's 73% year-over-year revenue growth mathematically cannot continue. Goldman Sachs noted that current stock prices already reflect 2026 growth - the next leg requires 2027 estimates to move up, not just 2026 delivery.

- China is a permanently impaired market. Meaningful data center revenue from China is explicitly excluded from guidance. Beijing has blocked H200 imports; export restrictions on high-end chips appear permanent. China was once a 20%+ revenue contributor. That demand does not return regardless of diplomatic conditions.

- Custom silicon is improving and the timeline is shortening. Google TPUs, Amazon Trainium, and Meta MTIA are no longer 'not ready' - they are 'not ready for general workloads.' Each generation narrows the gap. If one hyperscaler (most likely Google) successfully migrates 30–40% of workloads to internal silicon, NVIDIA loses the most important price discovery signal in the market.

- Gross margin compression on Rubin transition. Jensen's answer on margin sustainability - 'delivering generational leaps' - is philosophically correct but operationally uncertain. Every architecture transition carries yield risk, memory shortage risk (HBM supply is already constrained), and pricing pressure risk as Blackwell supply normalizes. A 300–400 basis point gross margin compression would materially reset earnings estimates.

Investment thesis: 24–36 month horizon

I do not offer a day-trade plan here. What I will say is this: NVIDIA is one of three or four companies in history that have simultaneously dominated hardware, software, and ecosystem development in a transformational compute transition. The prior examples - Intel in x86 (1990s), Cisco in networking (1990s), Qualcomm in mobile (2000s) - all saw stock prices that significantly overshot and undershot fair value at different points in the cycle. NVIDIA will not be an exception to that pattern.

My 24–36 month investment thesis rests on three convictions. First, the inference scaling law is real and will drive a 5–7 year infrastructure buildout cycle that outlasts any 12-month CapEx normalization. Second, sovereign AI spending is a new structural demand category that did not exist at scale in 2023 and will grow proportionally with GDP globally. Third, CUDA's moat is durable for at least two more architecture generations — meaning until at least 2029–2030 before genuine share displacement risk becomes primary.

The honest caveat: I am buying a company trading at $4.6 trillion market cap. That is not a hidden gem. It is a bet that the AI compute infrastructure buildout is in inning three of nine, not inning seven of nine. If I am wrong about the inning, the drawdown will be painful. I size accordingly — meaningful but not a single-stock concentration bet. A 30–40% correction is a real scenario and a long-term investor should be prepared to add into it, not sell into it.

Investment parameter

12-month price target (analyst consensus): $203–$218 range

24-month thesis: Inference scaling + sovereign AI drives continued Data Center growth

36-month upside case: Rubin ramp + enterprise AI adoption = $300+ range on 30x FY28E EPS

Base case drawdown risk: 30–40% correction if CapEx normalization narrative takes hold

Hard stop on thesis: Two consecutive quarters of Data Center revenue decline

Position sizing: Meaningful but not concentrated - account for semiconductor cycle volatility

When to add: Any pullback toward $150–$165 represents a generational accumulation zone
